My first multi-page Python scraper: crawling the detail pages of every movie on the first seven pages of 电影天堂's latest-movies list

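The script crawls the detail page of every movie on the first seven pages of the "latest movies" list on 电影天堂 and writes the results to an Excel file one row at a time.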

    import requests
    from lxml import etree
    import pandas as pd

    url = '.html'

    HEADERS = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.132 Safari/537.36'}


    def get_main_url(url):
        """Return the detail-page URLs of every movie listed on the given list page."""
        ori_url = ''  # site root, prepended to the relative detail links
        resp = requests.get(url=url, headers=HEADERS)
        # The page may contain characters that even gbk cannot decode,
        # so 'ignore' has to be passed.
        text = resp.content.decode('gbk', 'ignore')
        html = etree.HTML(text)
        tables = html.xpath("//table[@class='tbspan']")
        det_url_list = []
        for table in tables:
            detail_url = table.xpath(".//a/@href")[0]
            det_url_list.append(ori_url + detail_url)
        return det_url_list


    def parser_cont(cont, rule):
        """Remove the field label `rule` from `cont` and strip whitespace."""
        return cont.replace(rule, '').strip()


    def get_detail_page(url, i):
        """Scrape one movie detail page and return its fields as a new dict."""
        resp = requests.get(url=url, headers=HEADERS)
        text = resp.content.decode('gbk', 'ignore')
        html = etree.HTML(text)
        # A fresh dict per movie, so fields missing on this page are not
        # silently inherited from the previous movie.
        movie = {'索引': i}
        title = html.xpath("//div[@class='title_all']//font[@color='#07519a']/text()")[0]
        movie['电影名'] = title
        zooms = html.xpath("//div[@id='Zoom']")[0]
        try:
            movie['海报地址'] = zooms.xpath(".//img/@src")[0]
        except IndexError:
            movie['海报地址'] = '获取失败'
        contents = zooms.xpath(".//text()")
        for index, content in enumerate(contents):
            if content.startswith('◎产 地'):
                movie['制片国家'] = parser_cont(content, '◎产 地')
            elif content.startswith('◎类 别'):
                movie['类别'] = parser_cont(content, '◎类 别')
            elif content.startswith('◎上映日期'):
                movie['上映日期'] = parser_cont(content, '◎上映日期')
            elif content.startswith('◎豆瓣评分'):
                movie['豆瓣评分'] = parser_cont(content, '◎豆瓣评分')
            elif content.startswith('◎片 长'):
                movie['片长'] = parser_cont(content, '◎片 长')
            elif content.startswith('◎主 演'):
                # The cast spans several text nodes: collect them until the
                # next field label (a line starting with '◎').
                actors = [parser_cont(content, '◎主 演')]
                for x in range(index + 1, len(contents)):
                    actor_main = contents[x].strip()
                    if actor_main.startswith('◎'):
                        break
                    actors.append(actor_main)
                # Store the cast as one string so it fits in a single cell.
                movie['主演'] = ', '.join(a for a in actors if a)
        return movie


    def spider():
        i = 0
        base_url = '{}.html'  # list-page URL template; {} is the page number
        for x in range(1, 8):  # outer loop: the seven list pages
            url = base_url.format(x)
            det_url_list = get_main_url(url)
            for det_url in det_url_list:  # inner loop: every movie on this page
                movie = get_detail_page(det_url, i)
                i += 1
                print(movie)
                # Re-read the workbook, append this movie as a new row and
                # write it back. ./movie.xlsx must already exist.
                movies = pd.read_excel('./movie.xlsx')
                movies = pd.concat([movies, pd.DataFrame([movie])], ignore_index=True)
                movies.to_excel('./movie.xlsx')


    if __name__ == "__main__":
        spider()

Problems encountered

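1. I'm still not very fluent with XPath syntax.

2. I'm not yet comfortable with writing to Excel either. Every row in the final spreadsheet comes with a lot of useless extra rows. I don't know why; I spent most of a day changing things and it is still wrong, so I'm leaving it to solve later.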
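On the first point, the XPath expressions used in the script can be tried out on a small inline document instead of the live site. The sketch below is only an illustration: `SAMPLE_HTML`, `first_links` and `text_lines` are made-up names, and the markup is a hypothetical stand-in that merely mimics the `tbspan` tables the script looks for.

```python
from lxml import etree

# Hypothetical stand-in for a list page; the real markup may differ.
SAMPLE_HTML = """
<html><body>
  <table class="tbspan"><tr><td>
    <a href="/a/1.html">Movie A</a>
  </td></tr></table>
  <table class="tbspan"><tr><td>
    <a href="/b/2.html">Movie B</a>
    <a href="/b/extra.html">extra link</a>
  </td></tr></table>
  <table class="other"><tr><td><a href="/ignored.html">x</a></td></tr></table>
</body></html>
"""


def first_links(html_text):
    """Return the first link of every <table class="tbspan"> in html_text."""
    root = etree.HTML(html_text)
    links = []
    # "//" searches the whole document; [@class='tbspan'] filters by attribute.
    for table in root.xpath("//table[@class='tbspan']"):
        # The leading "." keeps the search inside this table;
        # "/@href" yields the attribute values as strings, not elements.
        hrefs = table.xpath(".//a/@href")
        if hrefs:  # skip tables without a link instead of indexing blindly
            links.append(hrefs[0])
    return links


def text_lines(html_text, xpath_expr):
    """Return the stripped, non-empty text nodes under the first match of xpath_expr."""
    root = etree.HTML(html_text)
    nodes = root.xpath(xpath_expr)
    if not nodes:
        return []
    # ".//text()" returns every text node below the element, in document order.
    return [t.strip() for t in nodes[0].xpath(".//text()") if t.strip()]


if __name__ == "__main__":
    print(first_links(SAMPLE_HTML))
    print(text_lines('<div id="Zoom">line one<br/>line two</div>', "//div[@id='Zoom']"))
```

The two things that are easy to get wrong are the leading `.` (without it, `//a/@href` searches the whole page again rather than the current table) and the fact that `xpath()` always returns a list, so `[0]` raises `IndexError` when nothing matches.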

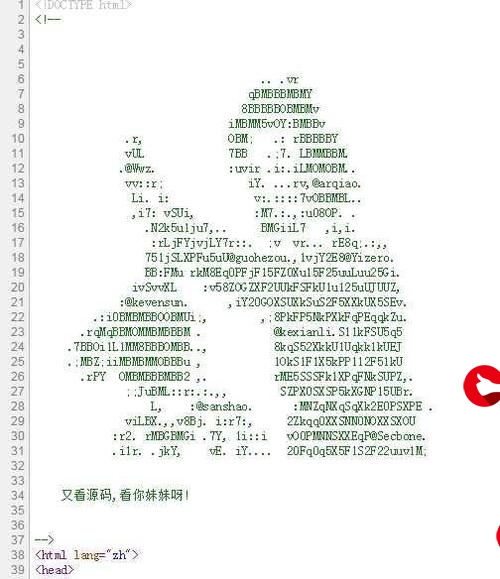
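For the Excel issue in point 2, one likely contributor (a guess, not a confirmed diagnosis) is the read/append/write round trip done for every movie: `DataFrame.to_excel` writes the row index as an extra first column by default, and `pd.read_excel` reads that column back as ordinary data (typically named `Unnamed: 0`), so the junk can grow with each pass. A minimal sketch of an alternative is to collect all rows first and write once with `index=False`. The helper names `rows_to_frame` and `save_movies` and the sample rows are made up for illustration, and writing `.xlsx` assumes an Excel engine such as openpyxl is installed.

```python
import pandas as pd


def rows_to_frame(rows):
    """Build one DataFrame from a list of per-movie dicts.

    Keys missing from a dict become NaN in that row, so movies with
    different sets of fields can still share one table.
    """
    return pd.DataFrame(rows)


def save_movies(rows, path="./movie.xlsx"):
    """Write all rows in a single call, without the index column."""
    frame = rows_to_frame(rows)
    # index=False stops pandas from writing the 0..n-1 row labels as an
    # extra, unnamed first column.
    frame.to_excel(path, index=False)
    return frame


# Made-up rows, standing in for the dicts returned by get_detail_page.
example_rows = [
    {"索引": 0, "电影名": "Movie A", "类别": "drama"},
    {"索引": 1, "电影名": "Movie B", "片长": "90 min"},
]
```

In `spider()` this would mean appending a copy of each result, for example `rows.append(dict(get_detail_page(det_url, i)))`, and calling `save_movies(rows)` once after both loops finish. That also removes the need for `movie.xlsx` to exist beforehand.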

The error is as follows:
